How Can Accepted Fair Lending Frameworks Help You Create a Machine Learning Governance Foundation?

Big data, machine learning (ML), and artificial intelligence (AI) present incredible opportunities for businesses. But they also raise big questions about how to implement and monitor these technologies to ensure that they’re treating customers fairly. Compounding this problem is the fact that, outside of credit, housing, and employment, there are no clear frameworks for the fair deployment of these technologies in most industries.

If you’re interested in taking a deeper dive into the world of Fair Lending, make sure to read our previous post.

SolasAI takes the rigorous fairness framework that is already used in employment, housing, and fair lending and makes it easy to implement in all other contexts. We believe that any company concerned about testing the robustness and fairness of its algorithmic models, and about mitigating the problems those tests uncover, should strongly consider adopting this framework. SolasAI is the right software to help your business leverage your data and models while proactively identifying and reducing your downside risk.

Machine Learning: Opportunity & Risk

In the last few years there has been significant discussion about how big data and powerful new machine learning algorithms can create value for businesses. Your company may already be deploying these tools and seeing impressive results. Companies and institutions in insurance, medicine, education, and countless other fields are implementing machine learning and artificial intelligence at a dizzying pace. They’re using these powerful algorithms to recognize faces, score performance, calculate risk, and perform countless other tasks. Impressive new applications are announced and launched virtually every day.

However, there are also growing concerns around the concept of algorithmic fairness. Journalists, academics, and consumer groups have documented a number of examples of algorithms treating people unfairly or acting in surprising or unexpected ways:

- The New York Times has reported on numerous instances of algorithmic bias over the last few years. For example, a story about facial detection algorithms that performed significantly better on white men than on women of color received national attention.

- A paper published in 2020 by researchers at Stanford and Georgetown demonstrated performance differences in common voice recognition algorithms that, at least arguably, have racial and gender components.

- The Partnership on AI is building an AI Incident Database documenting instances of algorithms generating unexpected outcomes — some as dramatic as deaths and false arrests.

These issues have started to catch the attention of regulators and lawmakers in recent years.

- The FTC published a blog post on April 19, 2021, emphasizing the importance of algorithmic fairness and of measuring discriminatory outcomes. The post noted that these concerns apply broadly across industries and that the FTC would hold bad actors accountable.

- The United States House of Representatives Task Force on AI has been holding hearings with the goal of crafting legislation governing the use of algorithms.

- The Algorithmic Accountability Act was introduced in the last Congress and has over 30 sponsors in the House and Senate. The Act would require companies to conduct impact assessments on the data and algorithms they use.

There have been a few examples abroad of countries taking concrete actions to address these concerns — most notably the European Union. In the United States, however, there is no such concrete framework outside of a few highly regulated contexts — namely employment and consumer lending.

The consumer lending industry has adopted a rigorous fair lending framework over the last three decades in response to legislation, regulations, and judicial decisions. Over the past decade, this fair lending framework has been adapted and expanded to respond to the growing use of big data and machine learning algorithms. In a nutshell, the fair lending framework is as follows:

A prohibition on consideration of protected class status (race, ethnicity, gender, etc.). Deciding when it is appropriate to consider these protected characteristics requires care. For example, considering gender may be appropriate in a model predicting the likelihood of certain medical conditions, but would not be appropriate for determining whether or not to extend credit to an applicant.

A determination of whether the ML model is combining variables to construct a proxy for protected class status. It has become common practice for companies to use AI to review or sort resumes submitted by job applicants. However, one company found that its resume review algorithm was using data within resumes to predict whether an applicant was female and then discriminating against those female applicants. The data the algorithm was using seemed innocuous enough to the company — for example, sports played and universities attended — but was enough for the algorithm to begin proxying for gender.
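One simple way to start screening for proxies is to check how strongly each input tracks protected class status. The sketch below is only an illustration under stated assumptions: the feature names, the data, and the 0.5 cutoff are all made up, and a per-feature correlation cannot detect the harder case described above, where a model combines several individually innocuous variables into a proxy.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no information
    return cov / sqrt(var_x * var_y)

def proxy_screen(features, protected, threshold=0.5):
    """Return features whose |correlation| with protected status meets the threshold.

    features:  {name: list of numeric values}, one value per applicant
    protected: list of 0/1 flags, one per applicant
    threshold: illustrative cutoff (an assumption, not an accepted standard)
    """
    scores = {name: pearson(values, protected) for name, values in features.items()}
    return {name: r for name, r in scores.items() if abs(r) >= threshold}

# Hypothetical resume data for eight applicants.
protected = [1, 1, 1, 1, 0, 0, 0, 0]
features = {
    "played_softball": [1, 1, 1, 0, 0, 0, 0, 0],
    "years_experience": [3, 5, 2, 6, 3, 5, 2, 6],
}

flagged = proxy_screen(features, protected)
for name, r in flagged.items():
    print(f"{name}: correlation with protected status = {r:.2f}")
```

A fuller check would ask how well all of the model's inputs, taken together, predict protected class status.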

A statistical analysis to determine whether the ML algorithm results in disparate impact on members of protected groups. Disparate impact means that a model generates positive outcomes for members of a protected group at a lower rate than for those not in the protected group. For example, many banks use machine learning models to determine whether or not to extend credit to individuals. Such a model might extend credit to African Americans at a lower rate than to white Americans. Disparate impact is not per se illegal. However, if disparate impact is present, it necessitates further analysis: courts and regulators will ask what the business justifications are for a model with disparate impact and whether a model with a lower level of disparate impact could be developed.

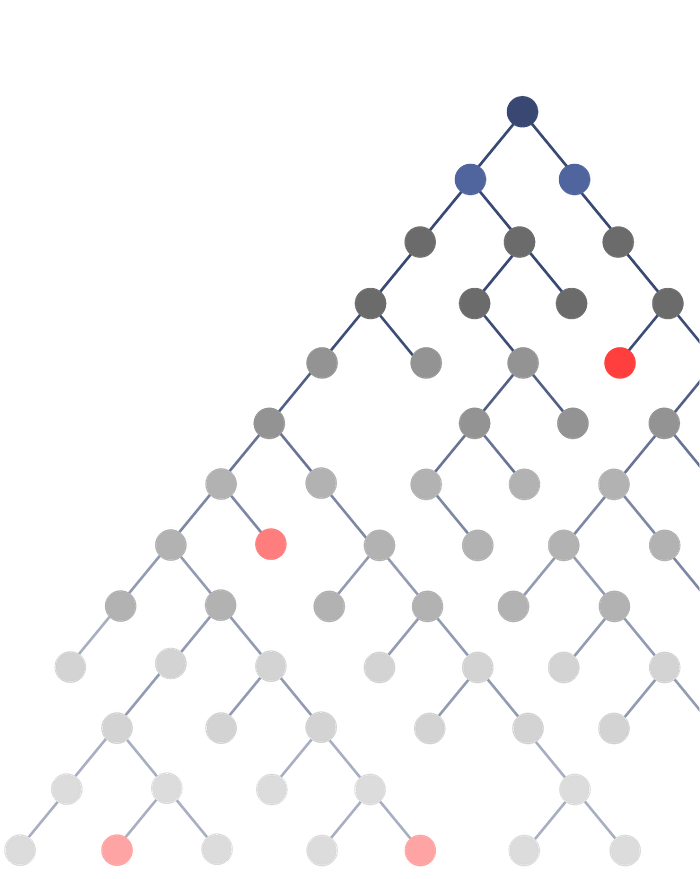
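To make the disparate impact test concrete, here is a minimal sketch that compares approval rates between two groups. The data is invented for illustration. The 0.8 flag threshold is an assumption borrowed from the four-fifths rule of thumb used in US employment contexts; the framework described above does not specify a cutoff, and real analyses typically also test for statistical significance.

```python
from collections import Counter

def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals, approved = Counter(), Counter()
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def adverse_impact_ratio(decisions, protected, reference):
    """Approval rate of the protected group divided by that of the reference group."""
    rates = approval_rates(decisions)
    if rates[reference] == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return rates[protected] / rates[reference]

# Made-up credit decisions: 30 of 100 approved vs. 50 of 100 approved.
decisions = (
    [("protected", True)] * 30 + [("protected", False)] * 70
    + [("reference", True)] * 50 + [("reference", False)] * 50
)

air = adverse_impact_ratio(decisions, "protected", "reference")
flagged = air < 0.8  # illustrative threshold only (see the note above)
print(f"Adverse impact ratio: {air:.2f}, flagged for review: {flagged}")
```

A ratio well below 1.0 does not by itself mean the model is unlawful. As noted above, it is the trigger for asking about business justification and less discriminatory alternatives.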

If the algorithm does result in disparate impact, search for a less discriminatory alternative model by training the algorithm on different subsets of the variables. As discussed above, the consumer lending industry has implemented some of the most sophisticated machine learning algorithms available and arguably the most robust fairness checks of any industry. When lenders detect disparate impact in their algorithms (which they regularly do), they look for tweaks to the baseline model to find a less discriminatory alternative model. However, this task has grown more difficult as datasets have grown larger and algorithms have become more complex.

The Difficulty of Fair Lending Analysis in the World of Big Data and ML

As datasets have grown and machine learning algorithms have become more sophisticated, conducting fair lending analysis has become increasingly difficult for lenders. For example, a dataset with only 40 variables has over a trillion subsets of variables that could be used to train a machine learning algorithm; a dataset with a few hundred variables has more potential subsets than there are atoms in the observable universe. Because the number of candidate subsets doubles with each added variable, an exhaustive search for a less discriminatory alternative quickly becomes infeasible. Additionally, while promising new techniques to “de-bias” algorithms have been developed, the legality of many of these approaches is questionable at best.
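The arithmetic behind those numbers is easy to check. Each variable is either in or out of a candidate model, so n variables give 2^n possible subsets (one of which is empty). The sketch below counts them; the figure of 10^80 atoms is a commonly quoted order-of-magnitude estimate and is used here only as an assumption for comparison.

```python
def subset_count(n_variables: int) -> int:
    """Number of non-empty variable subsets that could be used to train a model."""
    return 2 ** n_variables - 1

# Commonly quoted order-of-magnitude estimate (an assumption for comparison).
ATOMS_IN_OBSERVABLE_UNIVERSE = 10 ** 80

for n in (10, 40, 300):
    count = subset_count(n)
    exceeds = count > ATOMS_IN_OBSERVABLE_UNIVERSE
    print(f"{n:>3} variables -> about {count:.3e} subsets "
          f"(more than the atom estimate: {exceeds})")
```

Even at one model trained per second, working through a trillion subsets would take tens of thousands of years, which is why brute-force search is not an option.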

SolasAI is a first-of-its-kind solution to this problem. Developed by BLDS, LLC (a consultancy with five decades of experience advising on issues of algorithmic fairness) together with cutting-edge data scientists, SolasAI provides innovative solutions to both this problem and other issues that the use of machine learning poses.

SolasAI starts with a business’s existing model and evaluates it according to widely accepted standards. If disparate impact is found, SolasAI trains new models, using AI and optimization processes to learn which variables (or combinations of variables) are driving the disparity.

It then leverages this knowledge to iteratively search for the best alternative models.

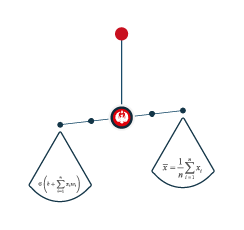

At the end of the process, the business is presented with a selection of alternative models that clearly demonstrates the tradeoff between fairness and accuracy. This allows businesses to easily choose the model that meets their performance requirements while maximizing fairness.
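The fairness and accuracy tradeoff can be pictured as a frontier of candidate models. The sketch below is not SolasAI's algorithm; it is a generic illustration with made-up candidates and scores. It keeps only the models that no other candidate beats on both accuracy and fairness, then picks the fairest one that still meets a hypothetical accuracy floor. It assumes every adverse impact ratio is below 1.0, so a higher ratio means a fairer model.

```python
from typing import NamedTuple, Optional

class Candidate(NamedTuple):
    name: str
    accuracy: float  # higher is better (for example, AUC)
    air: float       # adverse impact ratio; higher is fairer when below 1.0

def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is at least as good as b on both measures and better on one."""
    return (a.accuracy >= b.accuracy and a.air >= b.air
            and (a.accuracy > b.accuracy or a.air > b.air))

def pareto_frontier(candidates: list) -> list:
    """Keep the candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]

def fairest_meeting(candidates: list, min_accuracy: float) -> Optional[Candidate]:
    """Fairest frontier model that still meets the accuracy requirement."""
    eligible = [c for c in pareto_frontier(candidates) if c.accuracy >= min_accuracy]
    return max(eligible, key=lambda c: c.air) if eligible else None

# Hypothetical baseline and alternatives.
candidates = [
    Candidate("baseline", 0.800, 0.70),
    Candidate("alt_a", 0.795, 0.82),
    Candidate("alt_b", 0.780, 0.90),
    Candidate("alt_c", 0.770, 0.85),  # beaten by alt_b on both measures
    Candidate("alt_d", 0.740, 0.95),
]

frontier = pareto_frontier(candidates)
choice = fairest_meeting(candidates, min_accuracy=0.78)
print("Frontier:", [c.name for c in frontier])
print("Chosen:", choice.name if choice else "none meets the accuracy floor")
```

Presenting the frontier rather than a single answer leaves the business decision (how much accuracy to trade for how much fairness) with the people accountable for the model.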

Traditional solutions to these problems require costly teams of data scientists and time-intensive manual searches for alternative models, and those searches are likely insufficient in the face of such large datasets and sophisticated machine learning models. SolasAI automates all of this in a software package that plugs right into a business’s existing pipeline.

Why You Should Adopt Fair Lending Standards in Your Business

Because of the growing complexity of machine learning algorithms and the increasing scrutiny of legislators, regulators, journalists, academics, and consumer groups, BLDS and SolasAI encourage companies outside of consumer lending to consider adopting (and adapting) standards similar to those used in fair lending. These standards are rigorous and widely accepted, and adopting them would allow any business to respond to journalists, academics, or activists who raise concerns about unfair or discriminatory algorithmic practices in the future. If you want to get started, our fair lending checklist is a good first step: SolasAI Fair Lending Checklist.

In the absence of any specific legislation or regulation governing the use of machine learning algorithms, these standards would also allow businesses to respond to courts, lawmakers, and regulators in a way that is already widely accepted by these legal and regulatory actors. And in the event that specific legislation is adopted in the future, these standards will, at the very least, provide a firm foundation for any future compliance requirements. In short, BLDS and SolasAI believe that adopting these best practices from the fair lending context will allow businesses to get ahead of any issues that may arise in the future.

BLDS and SolasAI can help your business implement these standards and provide the technical software that will allow your business to understand its models better and build less discriminatory models that still meet your business needs. In this process, we work closely with our customers to understand their models and the unique requirements of their specific industry.

Tradition of Excellence

SolasAI was originally developed by BLDS, the industry-leading employment discrimination and fair lending consultancy.

- BLDS developed fairness and discrimination analyses based on statistical methods that test for evidence of discrimination.

- These techniques are now widely used and generally accepted by regulators and in courts.

- BLDS has provided expert testimony in many pivotal employment discrimination cases.

- BLDS advises numerous regulatory agencies, including the Department of Justice, Department of Labor, Federal Trade Commission (FTC), and the Consumer Financial Protection Bureau (CFPB).

Although BLDS developed these tools in the context of assessing discrimination in labor markets, many of the leading lending institutions turned to BLDS in the mid-1990s to ascertain how to comply with fair lending laws when using statistical models. Today, nearly every large lending institution in America uses some version of these methods to assess and mitigate disparate impact risk.

Now all of this expertise is available in a powerful and easy-to-use software product: SolasAI.

References

- https://www.pnas.org/content/pnas/117/14/7684.full.pdf

- https://incidentdatabase.ai

- https://financialservices.house.gov/news/documentquery.aspx?IssueID=126830

- https://www.congress.gov/bill/116th-congress/house-bill/2231/all-info

- https://digital-strategy.ec.europa.eu/en/library/proposal-regulation-laying-down-harmonised-rules-artificial-intelligence